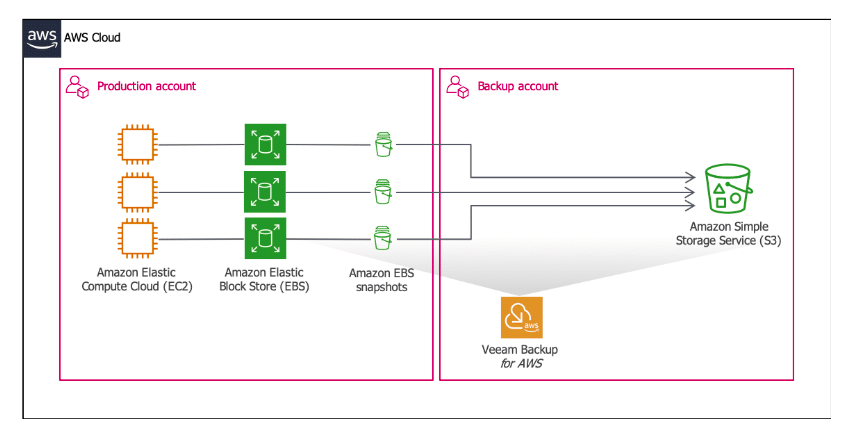

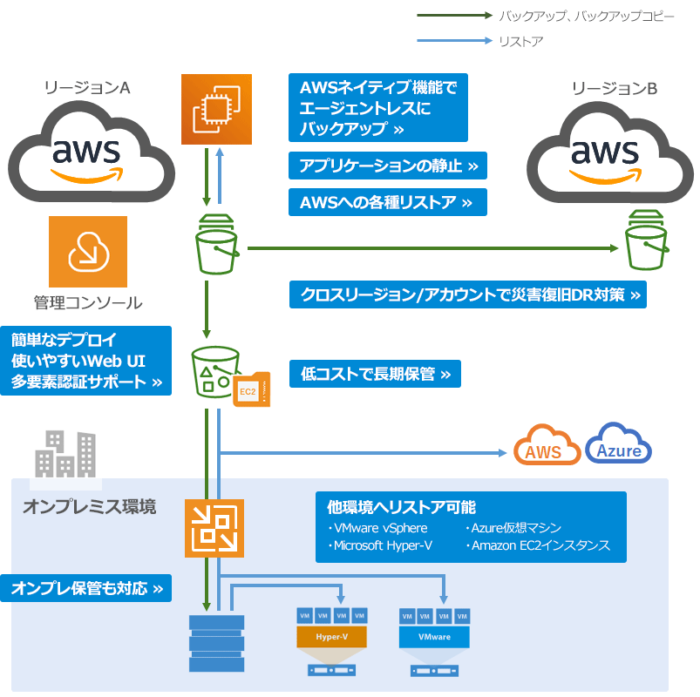

At first, backing up S3 can seem a bit paradoxical; after all, S3 is usually used as a backup for other services. But it doesn’t protect against accidental deletions or overwrites, and for mission-critical data, you can pay extra to have the bucket replicated across regions.

Prevent Accidental Deletion with Object Versioning

Let’s make one thing clear first: data in S3 is incredibly safe. It’s used for backups, so it doesn’t make much sense to back up your backup unless you’re really paranoid about losing your data.

And while S3 data is definitely safe from individual drive failures due to RAID and other backups, it’s also safe from disaster scenarios like widespread outages or warehouse failure. Data in S3 is stored in three or more Availability Zones, which means that even if one of them burns down, you still have two more copies. Unlike EBS-backed data volumes, which are stored in one place and can fail completely, S3 is already “backing up your data.”

What S3 doesn’t protect you from is yourself. It’s much, much more likely that you, or someone else with access, will accidentally delete something, or overwrite an important object with garbage data. This is the scenario that you should be worried about.

To protect against this, S3 has a feature called Object Versioning. It stores every version of each object, so if you accidentally overwrite one, you can restore a previous version. You can also fetch a previous version at any time by passing its version ID as a parameter to the GET request.

When versioning is enabled, rather than deleting an object directly, S3 adds a “delete marker” that makes the object act like it’s gone. If you didn’t mean to delete it, the deletion is reversible.

Versioning is off by default, but both Amazon and we recommend that you enable it if you can spare the storage increase. With a lifecycle policy in place (more on that below), bucket versioning shouldn’t cost much extra, as old versions won’t be stored for long.
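To make the versioning behavior concrete, here is a minimal sketch of the semantics described above: overwrites keep the old version, a delete only adds a marker, and removing that marker brings the object back. It is a toy in-memory model, not the S3 API; the names `VersionedBucket`, `Version`, and `remove_version` are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field
from itertools import count
from typing import Dict, List, Optional


@dataclass
class Version:
    version_id: str
    data: Optional[bytes]  # None means this entry is a delete marker

    @property
    def is_delete_marker(self) -> bool:
        return self.data is None


@dataclass
class VersionedBucket:
    """Toy model of a versioned bucket (assumption: not the real S3 API)."""
    _versions: Dict[str, List[Version]] = field(default_factory=dict)
    _ids: count = field(default_factory=lambda: count(1))

    def _new_id(self) -> str:
        return f"v{next(self._ids)}"

    def put(self, key: str, data: bytes) -> str:
        # An overwrite never replaces data; it appends a new version.
        version = Version(self._new_id(), data)
        self._versions.setdefault(key, []).append(version)
        return version.version_id

    def delete(self, key: str) -> str:
        # A plain delete only appends a delete marker on top of the history.
        marker = Version(self._new_id(), None)
        self._versions.setdefault(key, []).append(marker)
        return marker.version_id

    def get(self, key: str, version_id: Optional[str] = None) -> bytes:
        history = self._versions.get(key, [])
        if version_id is None:
            # Without a version ID, only the latest entry is visible.
            if not history or history[-1].is_delete_marker:
                raise KeyError(key)
            return history[-1].data
        for version in history:
            if version.version_id == version_id and not version.is_delete_marker:
                return version.data
        raise KeyError((key, version_id))

    def remove_version(self, key: str, version_id: str) -> None:
        # Permanently drops one entry; dropping a delete marker "undeletes".
        history = self._versions.get(key, [])
        self._versions[key] = [v for v in history if v.version_id != version_id]


if __name__ == "__main__":
    bucket = VersionedBucket()
    good = bucket.put("report.csv", b"good data")
    bucket.put("report.csv", b"garbage")          # accidental overwrite
    print(bucket.get("report.csv", good))         # old version is still there
    marker = bucket.delete("report.csv")          # accidental delete
    bucket.remove_version("report.csv", marker)   # undo the delete
    print(bucket.get("report.csv"))               # latest version is back
```

In the model, as in the behavior the article describes, nothing is lost until a specific version is removed explicitly, which is why a lifecycle policy is what eventually clears out old versions.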
